World War 1 soldiers. http://historyinphotos.blogspot.com/2012/06/world-war-i-ctd.html

Pre-hire assessments of job applicants have been used and studied for decades, and evolved out of general psychological testing. To understand them, we need to understand the evolution of personality assessments more generally.

The US Government is credited with developing the first modern personality test in 1919. Its purpose was to help screen out soldiers during World War 1 who were more likely to experience ‘Shell Shock’ (or PTSD in modern language).

The goal of the early psychologists working on these first tests, and in the early ‘personality measurement’ decades that followed, was to build an ‘x-ray’ of the inner self. However, early methodologies lacked standardization and many findings were invalidated by later generations, casting doubts that linger to this day on the validity and usefulness of the entire field of personality testing in general.

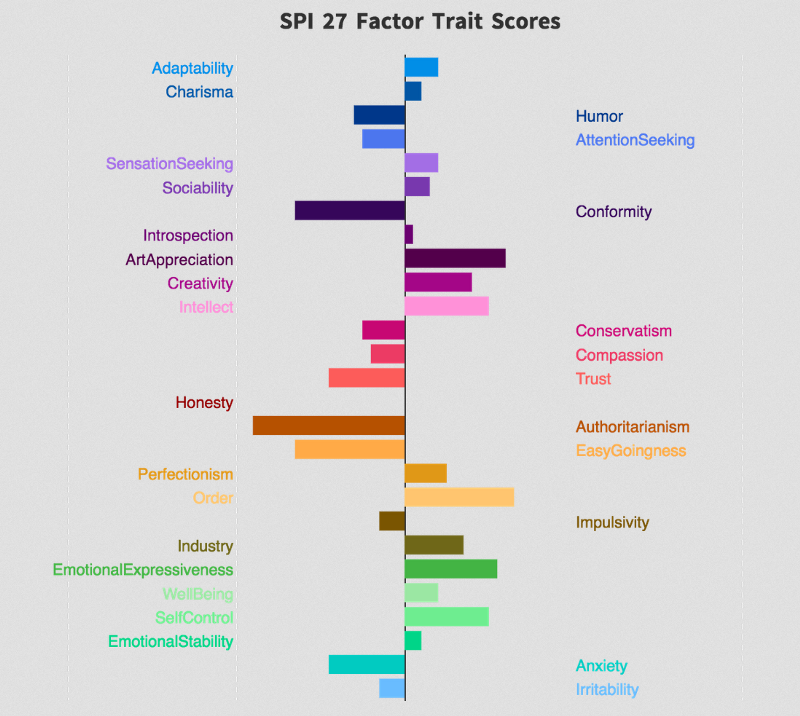

In modern times, we have seen the widespread adoption of newer, more consistent ‘factor’ based frameworks and personality testing methods such as the Big 5 personality dimensions and the HEXACO model (Honesty-Humility, Emotionality, Extraversion, Agreeableness, Conscientiousness and Openness).

These tests have more stable measures at the individual psychological ‘trait’ level. They also show greater distinctness of these traits from each other, as well as stability of these traits within people over time. Factor validity within and across cultures has been established, and these tests have become the modern ‘gold standards’ for basic personality assessment and pre-hire assessments in general.

A more ‘modern’ psychology test. https://sapa-project.org/

With these more consistent and reliable modern shared methods of measuring personality, we have also seen the evolution of modern ‘meta-analytic’ techniques for combining studies using similar underlying instruments. These two advances have allowed for more confidence in finding effect sizes.
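To make the idea of combining studies concrete, here is a minimal sketch of the simplest form of meta-analytic pooling: a sample-size-weighted average of the correlations reported by several studies. The study numbers are hypothetical, and published meta-analyses typically go further (for example, correcting for sampling error and measurement unreliability), so treat this as an illustration rather than the method any cited paper used.

```python
from math import sqrt

# Hypothetical studies: (sample size, observed correlation). Not real data.
studies = [(120, 0.22), (450, 0.31), (80, 0.10), (1000, 0.27)]


def pooled_correlation(studies):
    """Sample-size-weighted mean correlation across studies."""
    total_n = sum(n for n, _ in studies)
    return sum(n * r for n, r in studies) / total_n


def observed_variance(studies):
    """Sample-size-weighted variance of the correlations around the pooled mean."""
    r_bar = pooled_correlation(studies)
    total_n = sum(n for n, _ in studies)
    return sum(n * (r - r_bar) ** 2 for n, r in studies) / total_n


r_bar = pooled_correlation(studies)
sd = sqrt(observed_variance(studies))
print(f"pooled r = {r_bar:.3f}, SD across studies = {sd:.3f}")
```

Larger studies pull the pooled estimate toward their result, which is why shared instruments matter: the weighting only makes sense when every study measures the same thing.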

So, what have we found? Do these personality tests actually predict anything worth predicting when used in pre-hire assessment?

Yes.

In fact, personality is often a powerful predictor of future behaviors — even personality measures taken with very ‘simple’ tests at a single point in time.

Dozens of metastudies looking at decades of work across dozens of areas support this.

For example, here are the results of a leading study into predicting academic performance — using the ‘Big 5’ model. The cumulative sample sizes were over 70,000.

Predicting academic performance

Academic performance was found to correlate significantly with Agreeableness, Conscientiousness, and Openness. Where tested, correlations between Conscientiousness and academic performance were largely independent of intelligence. When secondary academic performance was controlled for, Conscientiousness added as much to the prediction of tertiary academic performance as did intelligence. (Source 12)

Predicting work performance

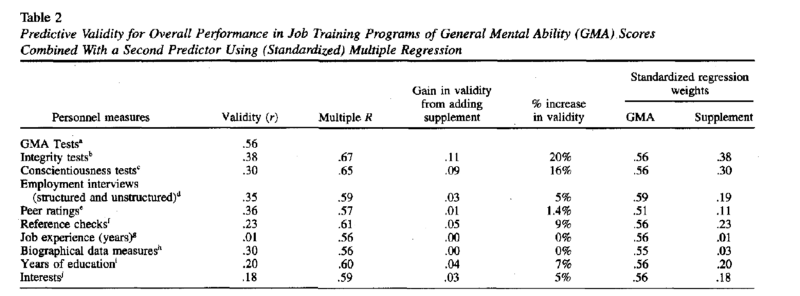

The most widely cited paper on this question is a very large meta-study that came out in 1998. This paper found that pre-hire assessment works across a wide variety of job types.

The Validity and Utility of Selection Methods in Personnel Psychology: Practical and Theoretical Implications of 85 Years of Research Findings. Schmidt and Hunter, 1998.

In particular, General Mental Ability (GMA) tests have more validity than just about anything else you can do (slightly surpassed as a single factor only by well-designed work trial tests). And when GMA tests are combined with well-constructed measures of personality traits such as integrity and conscientiousness, their validity increases significantly, so much so that together they can explain roughly 40 percent of the total variance in job performance (the square of the multiple R column) across decades of studies and many types of jobs.
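The step from a multiple R to ‘variance explained’ is just a squaring, and the multiple R for two predictors follows from their individual validities and their correlation with each other. The sketch below uses the standard two-predictor formula; the input validities (0.51 and 0.41) and the assumption that the two predictors are uncorrelated are illustrative choices made here, not figures quoted in the text above.

```python
from math import sqrt


def multiple_r(r1, r2, r12):
    """Multiple correlation of a criterion with two predictors.

    r1, r2: validity of each predictor against the criterion.
    r12: correlation between the two predictors.
    """
    r_squared = (r1**2 + r2**2 - 2 * r1 * r2 * r12) / (1 - r12**2)
    return sqrt(r_squared)


# Illustrative assumptions: validities of 0.51 and 0.41, uncorrelated predictors.
R = multiple_r(0.51, 0.41, 0.0)
print(f"multiple R = {R:.2f}, variance explained = {R**2:.0%}")
```

With these inputs the multiple R comes out at about 0.65, or roughly 43% of variance. The more the two predictors overlap (a higher `r12`), the less the second one adds.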

Other meta-analyses looking at other features of ‘worker performance’ have similar findings. For example, consider this large meta-study on predicting absenteeism from personality.

Meta-analysis was applied to studies of the validity of pre-employment integrity tests for predicting voluntary absenteeism. Twenty-eight studies based on a total sample of 13,972 were meta-analysed. The estimated mean predictive validity of personality-based integrity tests was 0.33. This operational validity generalized across various predictor scales, organizations, settings, and jobs (SD 0.00).

The results indicate that pre-hire assessments can be effective in reducing absenteeism in organizations, particularly if personality-based integrity tests are utilized.

But how we measure traits is just one part of the puzzle. What do we do with them once we’ve collected them?

For this, we can look at modern research into the effectiveness of algorithms vs. people in general, across fields.

CAN ALGORITHMS BEAT PEOPLE?

The broader findings here, from dozens of fields covering decades of research and hundreds of studies, are very clear. People, including ‘lower skilled’ people or ‘beginners’, armed with smart algorithms consistently beat or tie experts in hundreds of skills previously believed to require decades of expertise.

Below is a quote from one of the most widely cited studies in this area.

On average, mechanical-prediction techniques were about 10% more accurate than clinical predictions. Superiority for mechanical-prediction techniques was consistent, regardless of the judgment task, type of judges, judges’ amounts of experience, or the types of data being combined. Clinical predictions performed relatively less well when predictors included clinical interview data. These data indicate that mechanical predictions of human behaviors are equal or superior to clinical prediction methods for a wide range of circumstances. (Source 6)

The studies in this area generally suggest that humans are still very good at collecting data, but we tend to get less good (relative to machines) when we have to combine large volumes of data and assign weights. This is where machines are, generally speaking, better.
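As a rough illustration of what ‘mechanical’ combination means, the sketch below standardizes each predictor and applies the same fixed weights to every applicant. The applicants, predictor names and weights are all hypothetical; in practice the weights would be estimated from outcome data rather than chosen by hand.

```python
from statistics import mean, pstdev

# Hypothetical applicants with scores already collected by people or tests.
applicants = {
    "A": {"gma": 28, "integrity": 41, "interview": 3.5},
    "B": {"gma": 34, "integrity": 35, "interview": 4.0},
    "C": {"gma": 22, "integrity": 47, "interview": 4.5},
}
# Assumed weights for illustration only.
WEIGHTS = {"gma": 0.5, "integrity": 0.3, "interview": 0.2}


def mechanical_scores(applicants, weights):
    """Standardize each predictor, then apply the same fixed weights to everyone."""
    scores = {name: 0.0 for name in applicants}
    for predictor, weight in weights.items():
        values = [a[predictor] for a in applicants.values()]
        mu, sigma = mean(values), pstdev(values)
        for name, a in applicants.items():
            # z-score; fall back to 0 if every applicant has the same value
            z = (a[predictor] - mu) / sigma if sigma else 0.0
            scores[name] += weight * z
    return scores


scores = mechanical_scores(applicants, WEIGHTS)
ranking = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
print(ranking)
```

The point of the design is consistency: a human still gathers the interview rating, but the weighting step never changes from one applicant to the next.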

Many people and organizations resist this finding. But it is very robust, and has now been validated across decades in dozens of fields.

HR is no different. In fact, one of the most widely cited studies, conducted by the National Bureau of Economic Research, demonstrates this.

People want to believe they have good instincts, but when it comes to hiring, they can’t best a computer. Hiring managers select worse job candidates than the ones recommended by an algorithm, new research from the National Bureau of Economic Research finds. Looking across 15 companies and more than 300,000 hires in low-skill service sector jobs, such as data entry and call center work, NBER researchers compared the tenure of employees who had been hired based on the algorithmic recommendations of a job test with that of people who’d been picked by a human. The test asked a variety of questions about technical skills, personality, cognitive skills, and fit for the job. The applicant’s answers were run through an algorithm, which then spat out a recommendation: Green for high-potential candidates, yellow for moderate potential, and red for the lowest-rated. (Source #5).

And most of these studies relied solely on very ‘primitive’ forms of data modeling.

NEW MODELING TECHNIQUES GIVE NEW POWER IN COMBINING TRAITS INTO PREDICTIONS

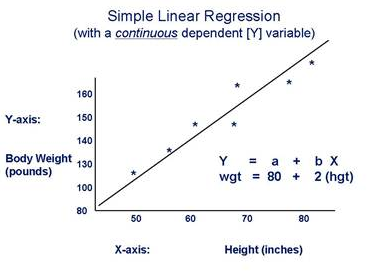

What does all this mean for predicting human performance and future actions from personality? The answer is that we don’t know for sure, but probably a lot. The vast majority of past papers on personality assessment and the subsequent prediction of work performance relied on linear regression or multiple linear regression as their main analytic technique.

Simple linear regression model.

Linear regression is very unlikely to be the best method, for a whole host of reasons. A major one is that many of these traits do not relate to performance in a linear way. For example, salespeople don’t get steadily better with increasing extraversion in a simple ‘more is better’ fashion. Even for what are thought of as ‘outgoing’ jobs and the most ‘extraverted’ positions, there are ‘bands’ that define the ideal worker, and these bands differ between companies. Here’s a quote from a more recent paper on this.

Despite the widespread assumption that extraverts are the most productive salespeople, research has shown weak and conflicting relationships between extraversion and sales performance. In light of these puzzling results, I propose that the relationship between extraversion and sales performance is not linear but curvilinear: Ambiverts achieve greater sales productivity than extraverts or introverts do. Because they naturally engage in a flexible pattern of talking and listening, ambiverts are likely to express sufficient assertiveness and enthusiasm to persuade and close a sale but are more inclined to listen to customers’ interests and less vulnerable to appearing too excited or overconfident.

A study of 340 outbound-call-center representatives supported the predicted inverted-U-shaped relationship between extraversion and sales revenue. (Source 15).
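A small sketch shows why a straight-line analysis can miss an inverted-U relationship entirely. The data below is synthetic and noise-free, invented purely for illustration (it is not from the study quoted above): revenue peaks at the middle of a 1 to 7 extraversion scale and falls off on both sides.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Synthetic data (an assumption for this example): revenue peaks at mid extraversion.
extraversion = [1, 2, 3, 4, 5, 6, 7]
revenue = [100 - 10 * (e - 4) ** 2 for e in extraversion]

# A curvilinear term: squared distance from the middle of the scale.
midpoint = sum(extraversion) / len(extraversion)
distance_from_mid = [(e - midpoint) ** 2 for e in extraversion]

r_linear = pearson(extraversion, revenue)
r_curved = pearson(distance_from_mid, revenue)
print(f"linear r = {r_linear:.2f}, r with squared distance from midpoint = {r_curved:.2f}")
```

In this contrived case the linear correlation is zero even though extraversion completely determines revenue, while the squared term correlates perfectly (negatively) with it. Real data is noisier, but the same blind spot applies to any purely linear model.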

It is now possible to collect much larger amounts of data and find ‘unique’ and ‘personalized’ insights in it that are much more ‘custom’ and predictive than the general models of the past.

These methods rely on more advanced ‘machine learning’ modeling techniques — techniques that are being used to do things like recommend products you might want to also buy on Amazon, movies you might like on Netflix and people you might fall in love with on Match.com.

THE EVOLUTION OF TALENT ANALYTICS & PRE-HIRE ASSESSMENTS

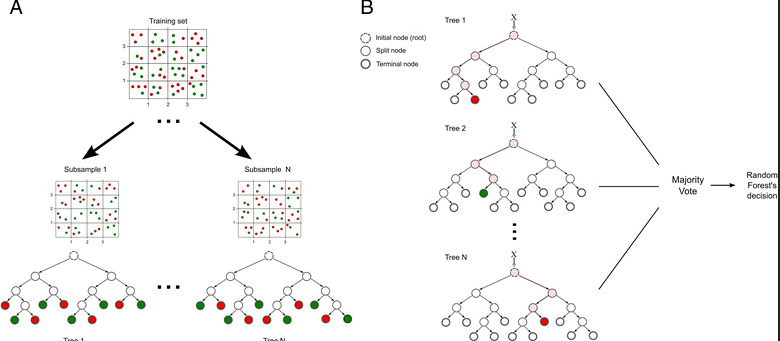

It is likely that we can learn much more — perhaps orders of magnitude more — from personality traits with more modern forms of data modeling than was ever possible in the past. The fields of data analysis and predictive modeling have advanced significantly and are able to find and model more complex interactions in large data sets.

Modeling methods such as deep neural networks and random forests (ensembles of decision trees) are better, more reliable and more stable than traditional linear modeling in many cases, and they will likely prove to be better for the types of data now (or soon to be) available in the human assessment space.

It is also very likely that humans have several inherent ‘biases’ in our decision-making process that make hiring decisions made solely by humans inherently risky and non-optimal, and that potentially open the door to litigation. This has been documented in hundreds of studies of responses to resumes with different names, of court sentencing by race, and of the Implicit Association Test (IAT). While the IAT has come under some fire in recent years for failing to predict real-world behavior, the sum total of the evidence around implicit bias is large and growing.

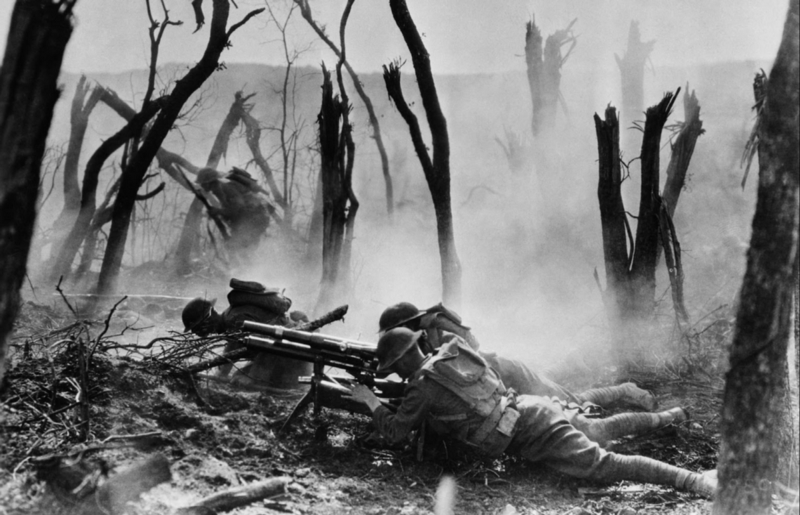

THE BUSINESS CASE FOR ‘CUSTOM’ TALENT ANALYTICS

There are real business advantages to getting this right, and huge costs to getting it wrong. The largest benefit is a culture that consistently attracts the best people.

Brand Equity

Companies using best practices in hiring are more likely to create talent pools and cultures that are more creative, enduring and successful.

Consider the following research done at the city level, which shows that more diverse, tolerant cities (captured here by what is called the ‘Bohemian Index’) are more creative:

High concentrations of ‘bohemians’ are also strongly linked to a metropolitan area’s high-technology success, as our bohemian index shows (table 1, column 3). Ten of the top 15 bohemian metro areas also rank among the nation’s top 15 high-tech areas, notably Seattle, Los Angeles, New York, Washington, San Francisco, and Boston. (Source 11)

Or consider the results at the company level, as reported by outlets such as Forbes.

A diverse and inclusive workforce is crucial for companies that want to attract and retain top talent. Competition for talent is fierce in today’s global economy, so companies need to have plans in place to recruit, develop, and retain a diverse workforce. (Source 10).

The largest costs of getting it wrong are likely a) turnover costs, b) discrimination lawsuits, and c) lost brand equity, along with reduced ability to attract top talent, positive PR, growth company multiples and customer goodwill.

What does all of this mean in terms of assessing people moving forward?

It means that we have now reached a point where smart, forward-thinking company leaders will likely no longer be able to justify not using the best assessments and data analytics models.

Moving forward, machines and the people working with them will be better, more transparent, more accountable, more consistent and (likely) reliably less biased than people working alone.

Talytica aims to be the leader in this emerging field. Our mission is to make hiring fair, simple and effective through the application of best practices in assessment science and analytics.

SOURCES

1. http://historyinphotos.blogspot.com/2012/06/world-war-i-ctd.html

2. Margaret Talbot, The Rorschach Chronicles, N.Y. TIMES, Oct. 17, 1999, at 28–29.

3. Rorschach test. https://psychcentral.com/lib/rorschach-inkblot-test/

4. The Validity and Utility of Selection Methods in Personnel Psychology: Practical and Theoretical Implications of 85 Years of Research Findings. Schmidt and Hunter, 1998.

5. “Machines Are Better Than Humans at Hiring the Best Employees.” By Rebecca Greenfield.

6. Clinical Versus Mechanical Prediction: A Meta-Analysis. Grove, Zald, Lebow, Snitz and Nelson. 2000. http://datacolada.org/wp-content/uploads/2014/01/Grove-et-al.-2000.pdf

7. Center for American Progress. “The Costly Business of Discrimination.” 2012. Crosby Burns.

8. The SAPA Project. Hexaco test. https://sapa-project.org/blogs/HEXACOmodel.html

9. Random Forest Model. https://www.researchgate.net/figure/280533599_fig5_Figure-2-Random-forest-model-Example-of-training-and-classification-processes-usin

10. Fostering Innovation Through a Diverse Workforce. Forbes.

11. https://www.brookings.edu/articles/technology-and-tolerance-diversity-and-high-tech-growth/

12. A Meta-Analysis of the Five-Factor Model of Personality and Academic Performance. Arthur Poropat. 2009. Psychological Bulletin.

13. Personality and Absenteeism: A Meta-Analysis of Integrity Tests. Deniz S. Ones, Chockalingam Viswesvaran and Frank L. Schmidt. European Journal of Personality. 2003.

14. A short history of machine learning. Bernard Marr. https://www.forbes.com/sites/bernardmarr/2016/02/19/a-short-history-of-machine-learning-every-manager-should-read/#143dafed15e7

15. Rethinking the Extraverted Sales Ideal: The Ambivert Advantage. By Adam Grant. Downloaded from: https://www.researchgate.net/publication/236138720_Rethinking_the_Extraverted_Sales_Ideal_The_Ambivert_Advantage